
In November of 2022, OpenAI released ChatGPT, a generative AI writing tool, sending shockwaves through education. While generative AI technology is not new, ChatGPT has made it accessible, intuitive, and free, catapulting the tool into the mainstream spotlight.

Following the release of ChatGPT, Tyton Partners fielded a national survey in February and March 2023 as part of our longitudinal research series, Time for Class, to gather the perspectives of students, instructors, and administrators in higher education.

The 2,000 instructors and administrators surveyed reported concern and fear over issues of academic integrity brought on by generative AI tools. “Preventing student cheating” jumped to the top instructional challenge reported by instructors in 2023, up from 10th in 2022. Despite this concern, institutions have been slow to respond with policy changes: only 3% of institutions have developed a formal policy regarding the use of AI tools, and most (58%) indicated they will begin to develop one “soon.” Instructors and institutional leaders are grappling with fundamental questions about how to use writing as an assessment in a world where former approaches to ensuring academic integrity have radically changed.

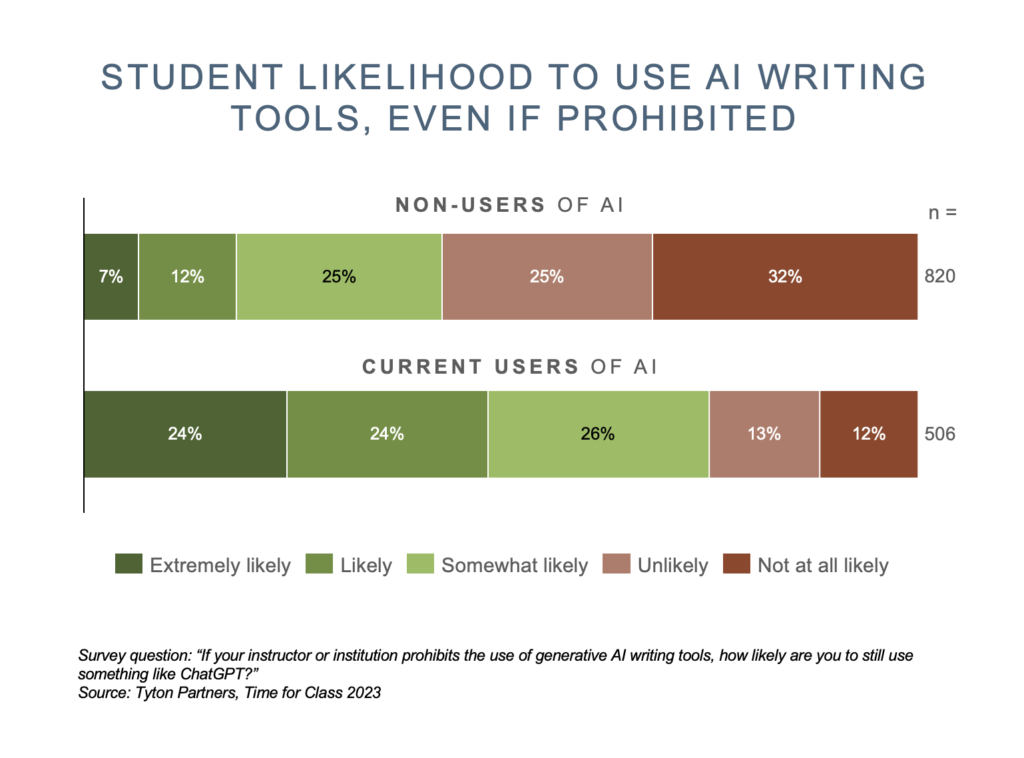

Our national survey of 2,000 2- and 4-year college students made it clear that there is no turning back now: 51% of students report that they will continue to use generative AI tools even if the tools are prohibited by their instructors or institutions. For the 27% of students who are currently using generative AI tools, that number jumps to 69%, demonstrating the value students see in and are gaining from these tools.

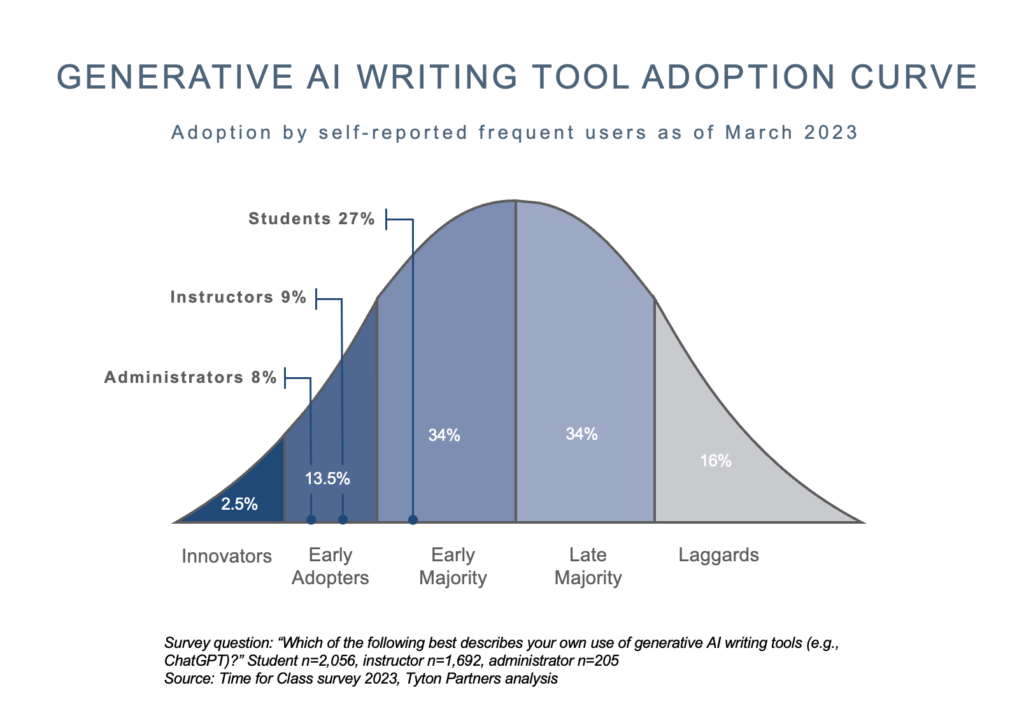

While institutional stakeholders debate next steps, students are flying up the adoption curve. Within just 100 days of ChatGPT’s launch, nearly one in three students reported being a regular user of generative AI tools – a rate of growth we have, quite literally, never seen before.

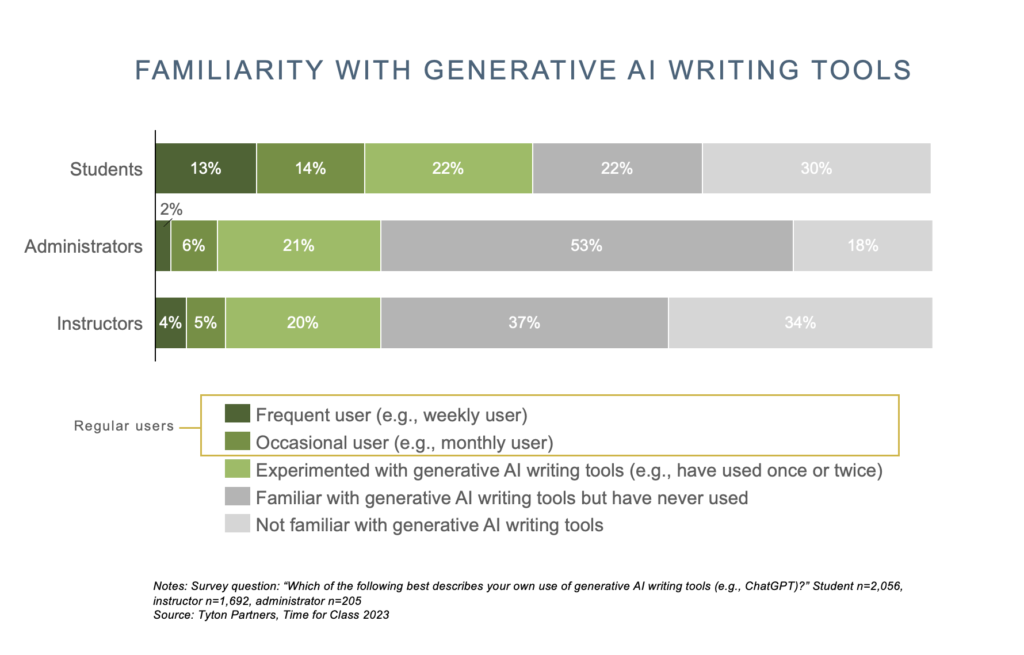

An even greater number of students (48%) have tried AI writing tools at least once, whereas 71% of instructors and administrators have never used these tools, with 32% reporting that they are not even aware of these tools.

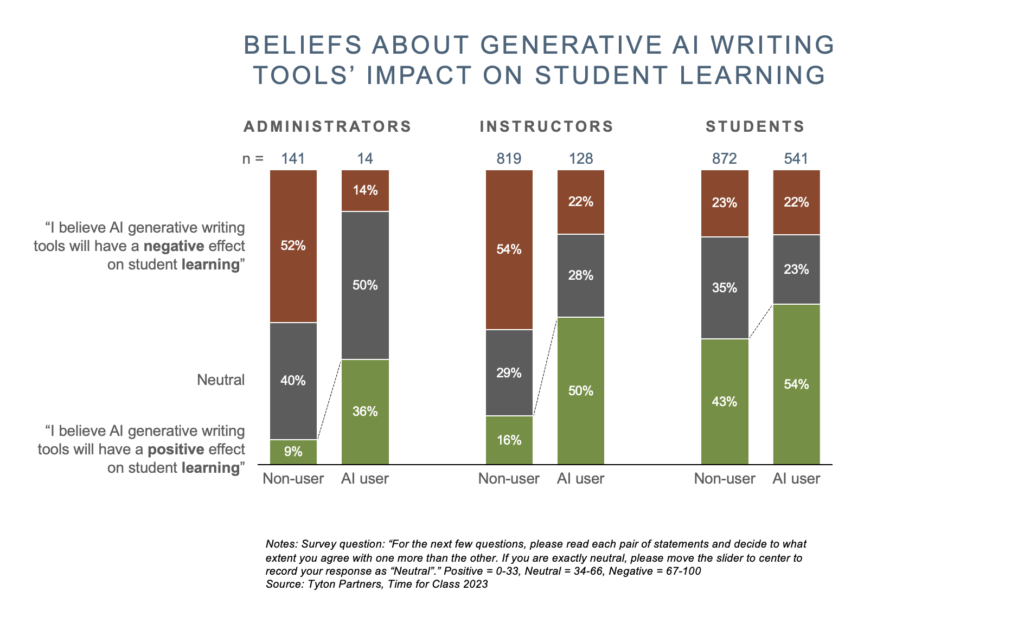

Instructors, administrators, and students who have experimented with generative AI tools are far more likely to recognize the tools’ potential value in education and to advocate for policies and practices at the institution level that enable “responsible use” of generative AI tools as part of teaching and learning. Said differently, when educators or students create an account and experiment with generative AI tools firsthand, their perspective on the tools’ potential for positive learning outcomes changes.

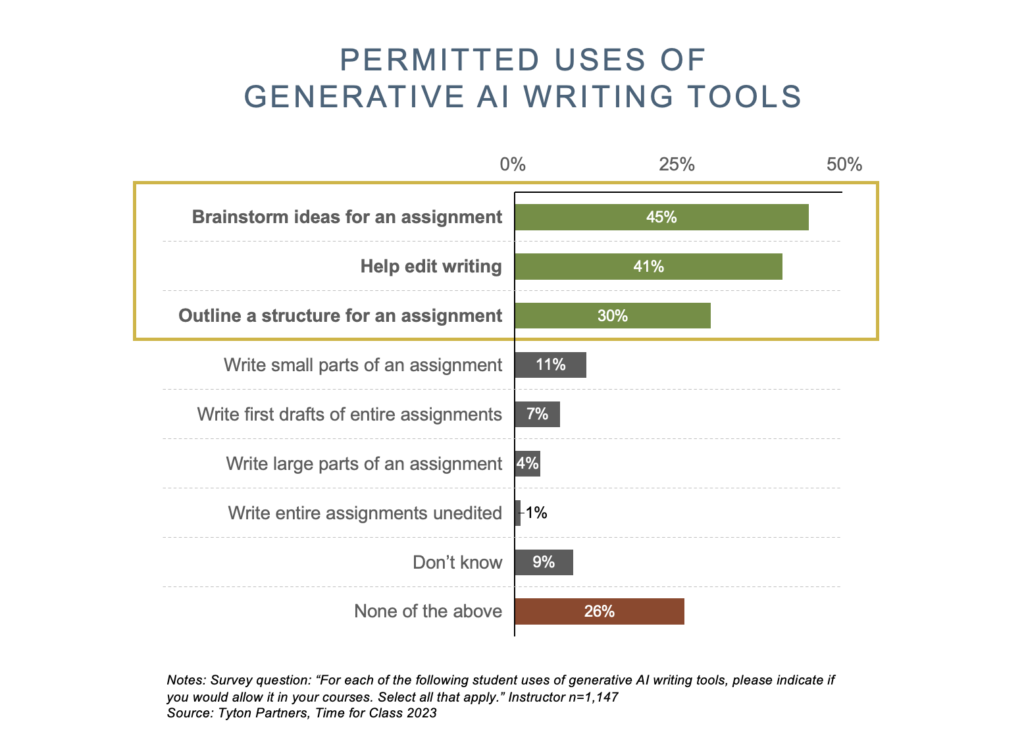

The early-adopter instructors who regularly use these tools are opting to make instructional changes to their courses as they find ways to integrate AI into their teaching methods. Currently, many instructors report drawing the line at using the tools to generate text, whereas non-generative uses of these AI tools (e.g., brainstorming, editing, and outlining) are seen as more permissible.

Though these tools pose a threat to the integrity of conventional summative assessments, they also can serve as a catalyst for a pedagogical shift that emphasizes the student’s process rather than just the final product. Taking writing as an example, it’s increasingly vital for instructors to gain insight into students’ writing processes – including outlining, brainstorming, and revising – all steps that generative AI tools can support. Necessitated or not, refocusing on the writing process and the “how” has the potential to improve writing instruction, not destroy it.

Instructors integrating generative AI into assignments should approach it with an intimate understanding of the technology’s limitations. A helpful question to consider when adapting assignments is, “What am I attempting to assess with this assignment that is uniquely human?” For example, one political science instructor told us they were having students interview local politicians and then use ChatGPT to write an essay summarizing the politicians’ views and assessing whether they were electable in the current political environment. In this example, the instructor is letting students outsource the actual writing process and is instead refocusing them on conducting a thoughtful interview and reading complex political subtext, both tasks that AI tools are not well suited for.

As some instructors adapt, 35% of non-user instructors indicate having made no changes to their courses in response to the release of new generative AI tools. While it is too early to recommend best practices, there is clearly a need to experiment, collaborate and make informed decisions about future policy and use of generative AI technology.

A few institutions are assembling task forces, hosting webinars, and organizing information sessions to educate instructors and administrators about this technology. The head of one such task force shared, “We begin every meeting not with slides, but by pulling up ChatGPT on a screen and experimenting with it live. I watch jaws drop. Once they’ve seen the tool, the discussion is very different.”

At their core, generative AI tools like ChatGPT are just that: tools. They are incredibly powerful and can be harnessed by students and instructors either to improve education or to rob students of foundational skills. The path forward will require an iterative approach, but for higher education to make informed decisions about where and how to monitor or integrate these tools, the 71% of instructors and administrators who have yet to try generative AI tools need to spend hands-on time with them. Outside of campus, education companies and service providers can explore ways to assist instructors in monitoring, regulating, and integrating AI, potentially by offering resources and facilitating conversations.

Only once all parties have a sufficiently deep understanding of generative AI tools will we be able to engage in thoughtful discourse and experimentation around the future of this technology in education.

As always, we welcome the opportunity to continue this discussion. Reach out to us if we can help your organization and its digital learning strategy.